Graph Neural Networks (GNNs), Simply Explained

AI, But Simple Issue #99

Hello from the AI, But Simple team! If you enjoy our content and custom visuals, consider sharing this newsletter with others or upgrading so we can keep doing what we do.

Traditional neural networks are well-established models for processing text, image, and video data.

Neural networks take numerical vectors as input, and each component of the vector is fed into its own input neuron. As a result, these models rely on inputs that have a fixed size and shape.

A 224x224 image always has 224x224 pixels. A sentence can be padded to a fixed length. The structure is consistent, and that consistency is something traditional networks depend on.
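To make the fixed-size requirement concrete, here is a minimal pure-Python sketch of a single fully connected layer. It assumes a grayscale image (one value per pixel) and uses a constant placeholder weight rather than trained values; the names `make_dense_layer` and `pad`, and the token ids, are hypothetical and chosen only for illustration.

```python
def make_dense_layer(n_inputs, n_outputs, weight=0.01):
    # Weight matrix of shape (n_outputs, n_inputs). The constant value is a
    # placeholder for illustration, not a trained weight.
    weights = [[weight] * n_inputs for _ in range(n_outputs)]

    def forward(x):
        # The layer only accepts vectors with exactly n_inputs components.
        if len(x) != n_inputs:
            raise ValueError(f"expected {n_inputs} inputs, got {len(x)}")
        # Each output neuron computes a weighted sum over every input component.
        return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

    return forward


def pad(tokens, length, pad_id=0):
    # Pad (or truncate) a token-id sequence so it always has `length` entries.
    return (tokens + [pad_id] * length)[:length]


n_pixels = 224 * 224                  # flattened 224x224 grayscale image: 50,176 components
image_layer = make_dense_layer(n_pixels, 2)
out = image_layer([1.0] * n_pixels)   # accepted: the input size matches

try:
    image_layer([1.0] * (200 * 200))  # a 200x200 image has a different size
except ValueError as err:
    print(err)                        # expected 50176 inputs, got 40000

sentence = [12, 7, 431]               # hypothetical token ids
print(pad(sentence, 6))               # [12, 7, 431, 0, 0, 0]
```

The layer's weight matrix is sized once, when the layer is created, which is why every image must be resized and every sentence padded to the same length before it can be processed.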

However, traditional neural networks struggle with certain kinds of structure that show up surprisingly often in everyday life.

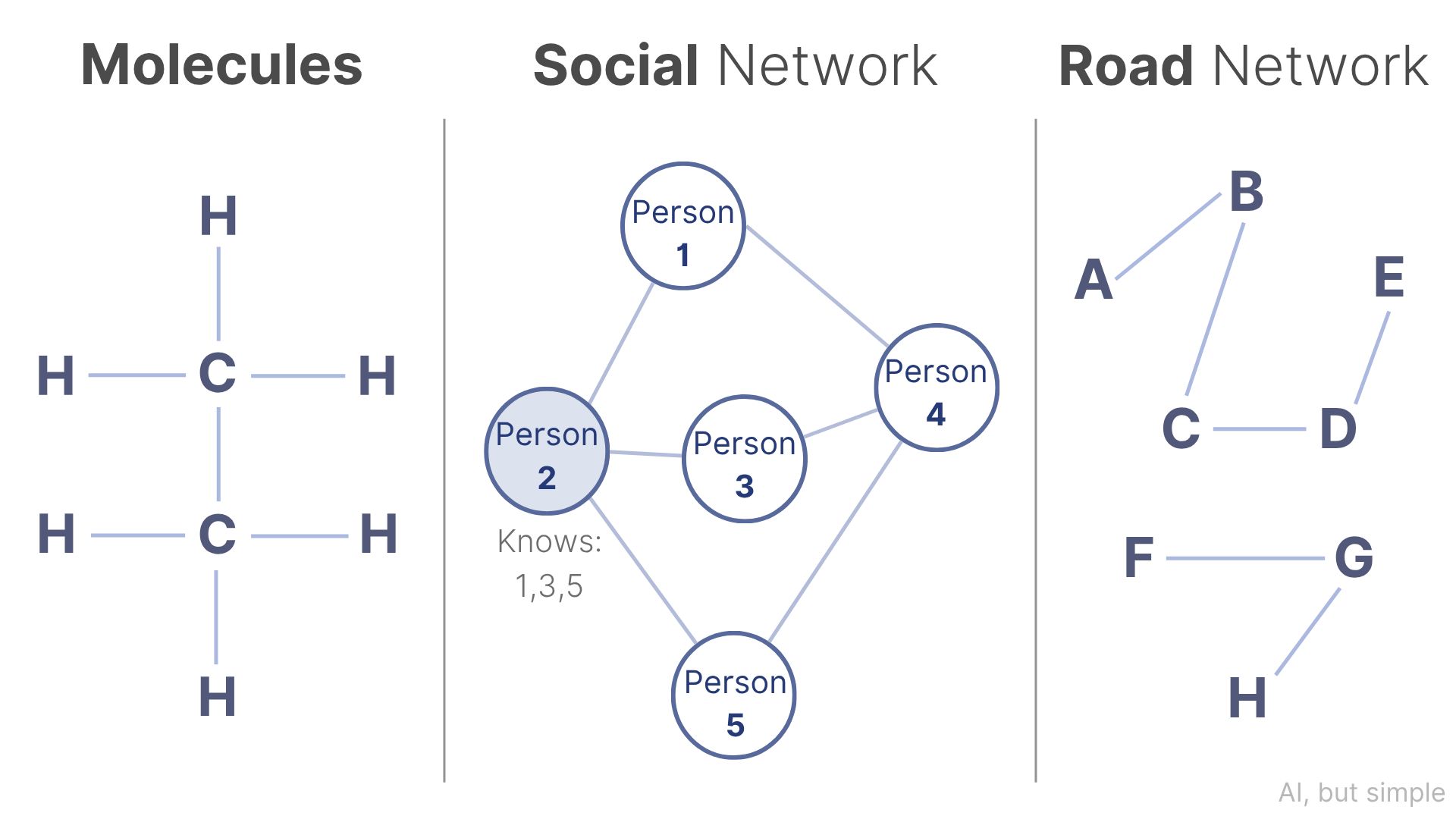

Consider a molecule. Or a social network. Or a city's road map.

These are graphs: collections of nodes (entities) and edges (relationships between them).
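As a rough sketch of how such a graph can be stored in code, the example below keeps a list of nodes and a list of edges, then builds an adjacency list that maps each node to its neighbors. The tiny friendship network (Alice, Bob, Carol, Dave) is a hypothetical example, and the edges are assumed to be undirected.

```python
# Nodes are entities; edges are relationships between pairs of entities.
nodes = ["Alice", "Bob", "Carol", "Dave"]
edges = [("Alice", "Bob"), ("Alice", "Carol"), ("Bob", "Carol"), ("Carol", "Dave")]


def adjacency_list(nodes, edges):
    # Map every node to the list of nodes it is directly connected to.
    adj = {n: [] for n in nodes}
    for u, v in edges:
        # Undirected relationship: record it in both directions.
        adj[u].append(v)
        adj[v].append(u)
    return adj


adj = adjacency_list(nodes, edges)
print(adj["Carol"])  # ['Alice', 'Bob', 'Dave']
print(adj["Dave"])   # ['Carol']
```

Notice that nodes can have different numbers of neighbors (Carol has three, Dave has one), which is part of what makes graphs irregular.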

Molecules contain different numbers of atoms, and road networks vary from country to country.
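One common way to write a graph down as numbers is an adjacency matrix, where entry (i, j) is 1 if nodes i and j are connected and 0 otherwise. The minimal sketch below uses water and methane as example molecules (atoms as nodes, bonds as edges; hydrogen labels are illustrative) to show why this does not give a fixed-size input: the matrix grows with the number of atoms, so flattening it yields a different vector length for each molecule.

```python
def adjacency_matrix(nodes, edges):
    # Square matrix with one row and one column per node.
    index = {n: i for i, n in enumerate(nodes)}
    size = len(nodes)
    matrix = [[0] * size for _ in range(size)]
    for u, v in edges:
        i, j = index[u], index[v]
        # Bonds are undirected, so the matrix is symmetric.
        matrix[i][j] = 1
        matrix[j][i] = 1
    return matrix


water = (["O", "H1", "H2"], [("O", "H1"), ("O", "H2")])
methane = (
    ["C", "H1", "H2", "H3", "H4"],
    [("C", "H1"), ("C", "H2"), ("C", "H3"), ("C", "H4")],
)

for name, (nodes, edges) in [("water", water), ("methane", methane)]:
    m = adjacency_matrix(nodes, edges)
    flat = [x for row in m for x in row]  # naive flattening into one vector
    print(name, f"{len(m)}x{len(m)}", "->", len(flat), "inputs")
# water 3x3 -> 9 inputs
# methane 5x5 -> 25 inputs
```

A layer built for 9 inputs cannot accept methane's 25, and vice versa, so simply flattening the graph does not solve the problem.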

So, how do you feed something so irregular into a neural network?

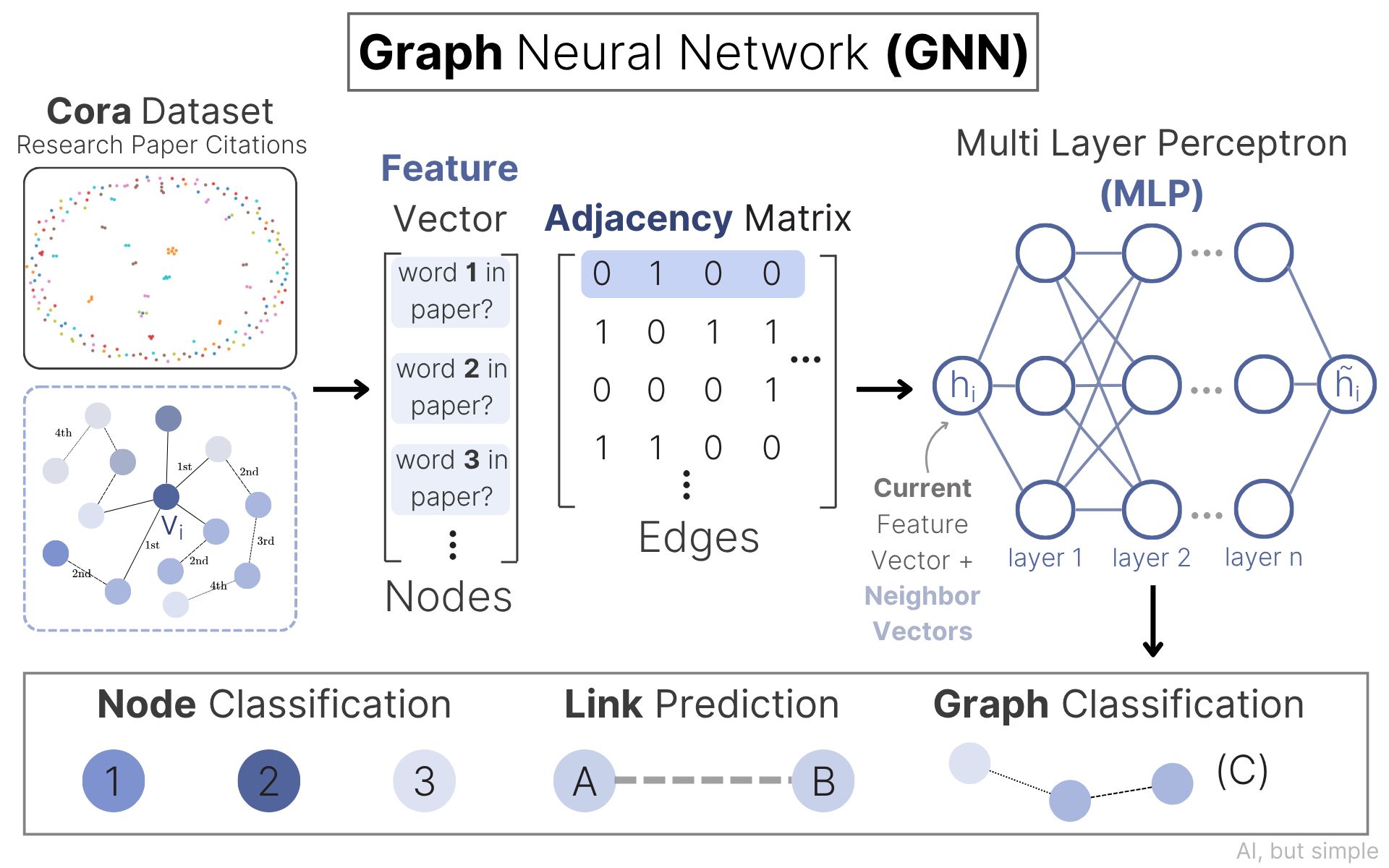

Graphs can model connections among two, three, or many entities at once. But can we go beyond describing a graph's current state and train models that classify its characteristics and even predict future connections?

As we'll see, the solution borrows a surprisingly familiar idea.

In this issue, we will provide a highly visual breakdown of how Graph Neural Networks (GNNs) operate on graph data and produce results that are useful in real-world applications.